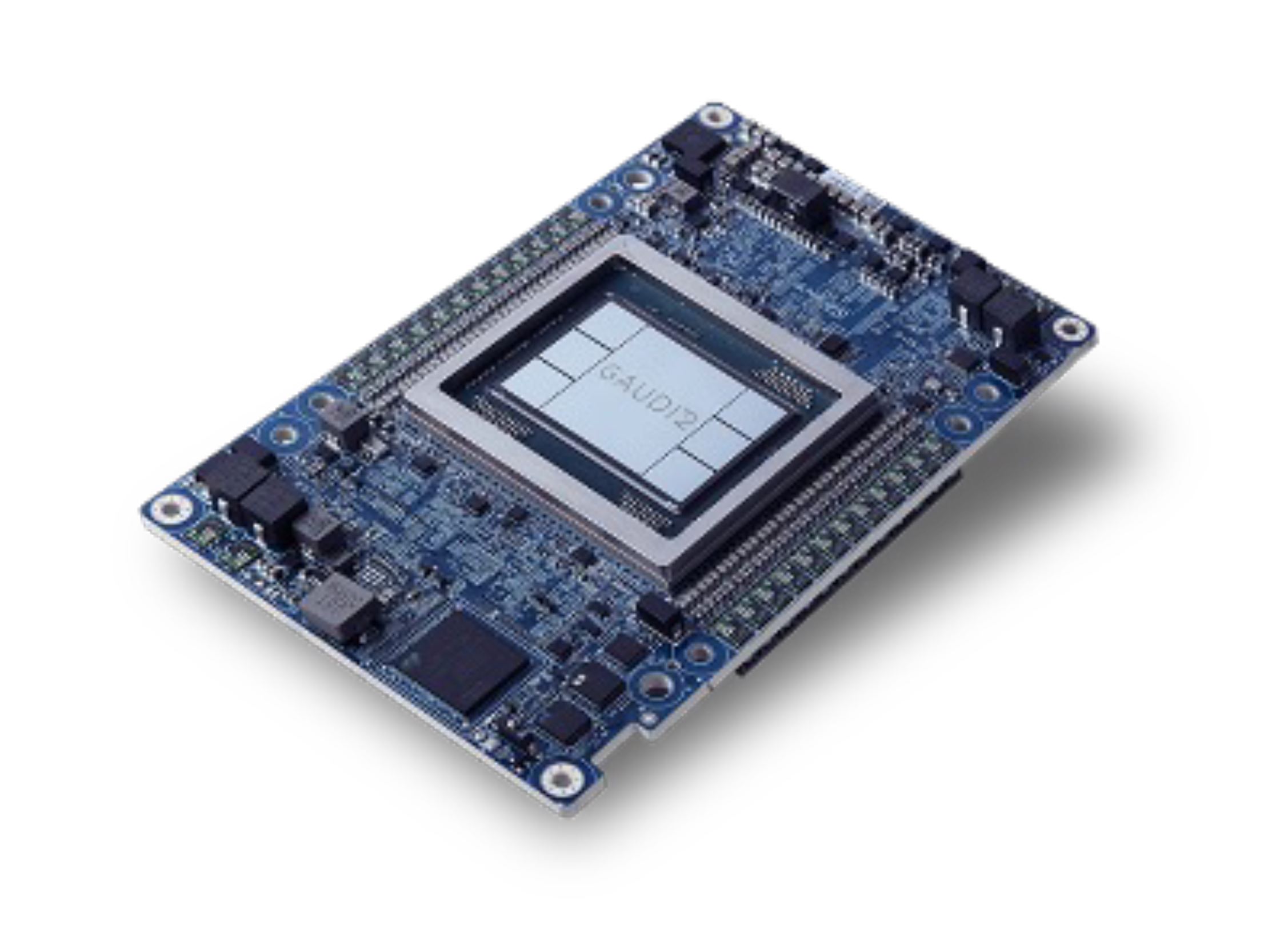

Gaudi 2 Specifications

Gaudi 2 supports all popular data types required for deep learning: FP32, TF32, BF16, FP16 & FP8 (both E4M3 and E5M2).
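To make the difference between the two FP8 encodings concrete, here is a minimal sketch that computes the largest finite value and the relative step size from the bit layout alone. The function name `max_normal` and the `ieee_like` switch are illustrative, not part of any Gaudi API. Whether a given accelerator treats the top exponent code of E4M3 as reserved (IEEE-like) or as finite values ("FN" style) is hardware-specific, so check the vendor documentation before relying on either range.

```python
def max_normal(exp_bits: int, man_bits: int, ieee_like: bool = True) -> float:
    """Largest finite value of a small binary float format (sketch)."""
    bias = 2 ** (exp_bits - 1) - 1
    if ieee_like:
        # Top exponent code is reserved for inf/NaN; mantissa may be all ones.
        max_exp = (2 ** exp_bits - 2) - bias
        max_frac = 2 - 2 ** -man_bits
    else:
        # "FN" style: the top exponent code holds finite values and only its
        # all-ones mantissa is NaN, so the largest mantissa is one step lower.
        max_exp = (2 ** exp_bits - 1) - bias
        max_frac = 2 - 2 * 2 ** -man_bits
    return 2.0 ** max_exp * max_frac


def relative_step(man_bits: int) -> float:
    """Spacing between neighbouring values relative to a power of two."""
    return 2.0 ** -man_bits


e5m2_max = max_normal(5, 2)                       # wide range, coarse steps
e4m3_max = max_normal(4, 3)                       # IEEE-like convention
e4m3_fn_max = max_normal(4, 3, ieee_like=False)   # "FN" convention
```

E5M2 trades precision for range (step 0.25, maximum 57344), while E4M3 has finer steps (0.125) but a much smaller maximum (240 or 448, depending on the convention). This is why E5M2 is usually associated with gradients and E4M3 with weights and activations.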

96 GB HBM2e Memory

Optimized capacity for FP8 models with large context windows and batch sizes.

2.4 Tbps RoCE v2 Bandwidth

Fast GPU interconnect for training and multi-node inference.

865 TFLOPS FP8

Superior token cost and performance at FP8 precision.

2.8X Faster Inference

vs. A100 with Gaudi 2 running at FP8, and 1.4x faster at BF16.
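As a rough illustration of what 96 GB of device memory means for FP8 serving, the sketch below estimates weight and KV-cache footprints. Everything about the model is an assumption for the sake of the arithmetic: a hypothetical 70B-parameter model with 80 layers, 8 key/value heads of dimension 128, FP8 weights (1 byte each), and a BF16 KV cache (2 bytes per element). Activations and runtime overhead are ignored, so treat the result as an optimistic bound rather than a sizing guarantee.

```python
HBM_GB = 96  # per-device capacity from the specifications above


def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Weight footprint in decimal GB (1e9 params * bytes / 1e9)."""
    return params_billion * bytes_per_param


def kv_cache_gb(layers: int, kv_heads: int, head_dim: int,
                seq_len: int, batch: int, bytes_per_elem: int) -> float:
    """KV-cache footprint in decimal GB; the factor 2 covers keys and values."""
    total_bytes = 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_elem
    return total_bytes / 1e9


# Hypothetical model shape and workload (assumptions, not vendor figures).
w = weights_gb(params_billion=70, bytes_per_param=1)
kv = kv_cache_gb(layers=80, kv_heads=8, head_dim=128,
                 seq_len=8192, batch=8, bytes_per_elem=2)
fits = (w + kv) <= HBM_GB
print(f"weights={w:.1f} GB, kv_cache={kv:.2f} GB, fits={fits}")
```

Under these assumptions the weights take 70 GB and the cache about 21.5 GB, which leaves only a few GB of headroom on a single device. Larger batches or longer contexts would need tensor parallelism across the node.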

Intel Gaudi 2 on Denvr AI Cloud

Best Price-Performance

96 GB HBM2e at the lowest price point. Near-H100 performance on supported models for teams optimizing cost per token.

LLM Training & Inference

Native support for PyTorch and Hugging Face Optimum. Train and serve popular open-weight models including Llama, Mixtral, and Qwen.
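For code that should run both on Gaudi and on a development machine, a small device-selection helper can be handy. The sketch below assumes the Intel Gaudi PyTorch bridge is installed as the `habana_frameworks` package and that Gaudi devices are addressed with the `"hpu"` device string. The helper name `pick_device` is hypothetical, and it only checks that the package is importable, not that a device is actually present.

```python
import importlib.util


def pick_device() -> str:
    """Return "hpu" if the Gaudi PyTorch bridge is installed, else "cpu".

    This checks package availability only; it does not probe the hardware.
    """
    if importlib.util.find_spec("habana_frameworks") is not None:
        return "hpu"
    return "cpu"


device = pick_device()
print(f"selected device: {device}")
```

In a training script the returned string would typically be passed to `torch.device(...)` after importing the bridge module, so the same code path works on a laptop and on a Gaudi node.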

Alternate Silicon

Evaluate non-NVIDIA accelerators to maximize your AI compute budget. Train models, or serve them with the vLLM serving engine.

Managed Storage

High-performance Weka filesystem and local NVMe available. No external storage to provision for datasets, checkpoints, or model artifacts.

| Platform | GPUs | GPU VRAM | vCPUs | Memory | Local Storage | Interconnect | On-Demand |
|---|---|---|---|---|---|---|---|
| Intel Gaudi 2 | 8 | 96 GB | 160 | 1024 GB | 4x 7.6 TB NVMe | - | $1.25 / GPU |

Configurations

Per-minute billing with on-demand and reserved options. All configurations available as bare metal, VM, or model endpoints.
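A quick way to budget a job under per-minute billing is sketched below. It assumes the listed on-demand rate of $1.25 per GPU is an hourly rate that is prorated by the minute; the page does not state the time unit, so confirm it before using these numbers. The function name `on_demand_cost` is illustrative.

```python
def on_demand_cost(gpus: int, minutes: int, rate_per_gpu_hour: float) -> float:
    """Prorated cost in dollars, assuming an hourly per-GPU rate billed per minute."""
    return round(gpus * rate_per_gpu_hour * minutes / 60, 2)


# A full 8-GPU Gaudi 2 node for a 90-minute fine-tuning run (assumed hourly rate).
cost = on_demand_cost(gpus=8, minutes=90, rate_per_gpu_hour=1.25)
print(f"${cost:.2f}")  # $15.00
```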

Related GPUs

Compare Denvr GPU options by workload and performance requirements.

| | NVIDIA A100 | Intel Gaudi 2 | NVIDIA H100 |
|---|---|---|---|
| Optimized For | Distributed training, multi-node scaling | Cost-effective inference, fine-tuned models | Large model training, high-throughput inference |
| VRAM | 80 GB | 96 GB | 80 GB |
| Memory Bandwidth | 2,039 GB/s | 2,450 GB/s | 3,350 GB/s |
| FP64/FP32 | 19.5 TFLOPS | - | 67 TFLOPS |
| FP16 | 312 TFLOPS | 432 TFLOPS | 1,979 TFLOPS |
| FP8 | - | 865 TFLOPS | 3,958 TFLOPS |
| NVLink | 600 GB/s | - | 900 GB/s |
| On-Demand Pricing | $1.35 / GPU | $1.25 / GPU | $2.30 / GPU |
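One way to read the comparison above is memory capacity per dollar of on-demand spend. The sketch below uses only the VRAM and price figures listed; the two 80 GB entries are labeled by their price rather than by product name, and the price time unit is whatever the listing uses (it is not stated on the page). This ignores throughput entirely, so it is one input to a cost-per-token estimate, not a substitute for benchmarking.

```python
# (VRAM in GB, on-demand price per GPU) taken from the comparison above.
options = {
    "Gaudi 2 (96 GB, $1.25)": (96, 1.25),
    "80 GB option at $1.35": (80, 1.35),
    "80 GB option at $2.30": (80, 2.30),
}


def gb_per_dollar(vram_gb: float, price: float) -> float:
    """Device memory obtained per dollar of on-demand spend."""
    return vram_gb / price


ranking = sorted(options, key=lambda name: gb_per_dollar(*options[name]), reverse=True)
for name in ranking:
    print(f"{name}: {gb_per_dollar(*options[name]):.1f} GB per dollar")
```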

Infrastructure you can trust at scale

As an Intel Partner Alliance member, we build and operate AI clusters following vendor reference architectures. Your models and data are protected by strict privacy safeguards and SOC 2 Type 2 security practices.