A100 Specifications

The A100 is ideal for large-scale model training and inference, delivering 80-90% of the newer H100's capability on many workloads at a lower cost.

80 GB

HBM2e Memory

Run 70B+ parameter models on a single GPU with quantized (for example, 4-bit) weights.

600 GB/s

NVLink Bandwidth

Third-gen NVLink for multi-GPU scaling.

312

TFLOPS FP16

624 TFLOPS with sparsity enabled.

19.5

TFLOPS FP64 Tensor Core

Double-precision Tensor Cores for scientific and HPC workloads.
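The 624 TFLOPS sparse figure relies on the 2:4 structured-sparsity pattern supported by the A100's sparse Tensor Cores, in which at most two of every four consecutive weights are nonzero. The sketch below only illustrates that pattern by magnitude-pruning a plain Python list; the function names are hypothetical, and this is not how PyTorch or NVIDIA's tooling applies sparsity to real tensors.

```python
def prune_2_of_4(weights: list[float]) -> list[float]:
    """Keep the two largest-magnitude values in each group of four; zero the rest."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned: list[float] = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two entries with the largest absolute value.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(w if i in keep else 0.0 for i, w in enumerate(group))
    return pruned


def is_2_of_4_sparse(weights: list[float]) -> bool:
    """True if every group of four has at most two nonzero entries."""
    return all(
        sum(1 for w in weights[s:s + 4] if w != 0.0) <= 2
        for s in range(0, len(weights), 4)
    )


w = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.4, 0.01]
print(prune_2_of_4(w))  # [0.9, 0.0, 0.0, -0.7, 0.0, 0.3, -0.4, 0.0]
```

Because the hardware can skip the zeroed half of each group, the peak rate doubles on paper (312 to 624 TFLOPS); measured speedups depend on the model and kernels and are usually smaller.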

NVIDIA A100 on Denvr AI Cloud

Cost Effective

Available in 80 GB, 40 GB, and 20 GB configurations. Match GPU capacity to your actual workload and avoid overpaying.
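A quick way to match capacity to workload is to estimate weight memory as parameter count x bytes per parameter and compare it with each configuration's per-GPU VRAM. The sketch below uses the VRAM and on-demand prices from the configuration table on this page; the helper names are hypothetical, and the 20% headroom for KV cache and activations is an assumption, not a Denvr guideline.

```python
from dataclasses import dataclass
from typing import Optional

# Bytes per parameter for weights only (assumption: ignores quantization overhead).
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


@dataclass(frozen=True)
class Config:
    name: str
    vram_gb: int   # memory per GPU (or per MIG slice)
    price: float   # on-demand price per GPU, as listed in the configuration table


CONFIGS = [
    Config("A100 MIG 20 GB", 20, 0.58),
    Config("A100 PCIe 40 GB", 40, 1.15),
    Config("A100 SXM 40 GB", 40, 1.15),
    Config("A100 SXM 80 GB", 80, 1.35),
]


def weight_gb(params_billion: float, precision: str) -> float:
    """Weights-only memory in decimal GB (billions of params x bytes per param)."""
    return params_billion * BYTES_PER_PARAM[precision]


def cheapest_single_gpu(params_billion: float, precision: str,
                        headroom: float = 0.2) -> Optional[Config]:
    """Cheapest config whose single GPU holds the weights plus headroom.

    The 20% default headroom for KV cache and activations is an assumption.
    """
    needed = weight_gb(params_billion, precision) * (1 + headroom)
    fits = [c for c in CONFIGS if c.vram_gb >= needed]
    return min(fits, key=lambda c: c.price) if fits else None


print(weight_gb(70, "fp16"))                 # 140.0
print(cheapest_single_gpu(70, "fp16"))       # None
print(cheapest_single_gpu(70, "int4").name)  # A100 SXM 80 GB
print(cheapest_single_gpu(7, "fp16").name)   # A100 MIG 20 GB
```

By this estimate, a 70B-parameter model in FP16 (140 GB of weights) does not fit on one 80 GB GPU, 8-bit weights (70 GB) exceed it once the assumed headroom is added, and 4-bit weights (35 GB) fit comfortably.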

Proven Ecosystem

The most widely supported data center GPU in the ML ecosystem. Fully compatible with PyTorch, TensorFlow, JAX, vLLM, and every other major training and serving framework.

Distributed Training

Multi-node scaling with InfiniBand for distributed workloads that don't require H100-class throughput.
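Whether a job benefits from multi-node scaling depends largely on gradient synchronization time. A common first-order estimate for a bandwidth-optimal ring all-reduce is that each participant transfers 2(N-1)/N times the buffer size, so time ≈ 2(N-1)/N x S / B. The sketch below applies that formula with each node as one ring participant; reading the "1600G" InfiniBand listing as roughly 1,600 Gb/s (200 GB/s) of usable per-node bandwidth is an assumption, and latency, protocol overhead, and compute/communication overlap are ignored.

```python
def ring_allreduce_seconds(buffer_bytes: float, participants: int,
                           bandwidth_bytes_per_s: float) -> float:
    """Bandwidth term of a ring all-reduce: 2(N-1)/N * S / B."""
    if participants < 2:
        return 0.0  # nothing to synchronize
    return 2 * (participants - 1) / participants * buffer_bytes / bandwidth_bytes_per_s


GBIT = 1e9 / 8  # bytes per second in one Gb/s

# Assumption: "IB 1600G" ~ 1,600 Gb/s of usable bandwidth per node.
ib_node_bw = 1600 * GBIT   # 2.0e11 B/s = 200 GB/s
grad_bytes = 7e9 * 2       # 7B parameters with FP16 gradients = 14 GB
t = ring_allreduce_seconds(grad_bytes, participants=4,
                           bandwidth_bytes_per_s=ib_node_bw)
print(f"{t:.3f} s")  # 0.105 s
```

Comparing this figure with the per-step compute time gives a rough sense of whether communication will dominate as nodes are added.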

Managed Storage

High-performance Weka filesystem and local NVMe available. No external storage to provision for datasets, checkpoints, or model artifacts.
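When deciding how much checkpoint data to keep on local NVMe versus the shared filesystem, a rough size estimate helps. The sketch below assumes a mixed-precision Adam checkpoint that stores FP16 weights, FP32 master weights, and both FP32 Adam moments (2 + 4 + 4 + 4 = 14 bytes per parameter); real checkpoints vary by framework and by what is saved. The capacity uses the raw 6x 3.8 TB NVMe figure from the configuration table, ignoring filesystem overhead.

```python
# Assumed checkpoint contents for mixed-precision Adam (illustrative, not
# a framework guarantee): bytes stored per parameter.
CHECKPOINT_BYTES_PER_PARAM = {
    "fp16_weights": 2,
    "fp32_master_weights": 4,
    "adam_first_moment": 4,
    "adam_second_moment": 4,
}


def checkpoint_tb(params: float) -> float:
    """Approximate size of one checkpoint in decimal terabytes."""
    per_param = sum(CHECKPOINT_BYTES_PER_PARAM.values())  # 14 bytes
    return params * per_param / 1e12


def checkpoints_that_fit(params: float, capacity_tb: float) -> int:
    """How many full checkpoints fit in the given raw capacity."""
    return int(capacity_tb // checkpoint_tb(params))


node_nvme_tb = 6 * 3.8  # 6x 3.8 TB NVMe on the A100 SXM 80 GB node
print(checkpoint_tb(70e9))                       # 0.98
print(checkpoints_that_fit(70e9, node_nvme_tb))  # 23
```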

Platform

GPUs

GPU VRAM

vCPUs

Memory

Local Storage

Interconnect

On-Demand

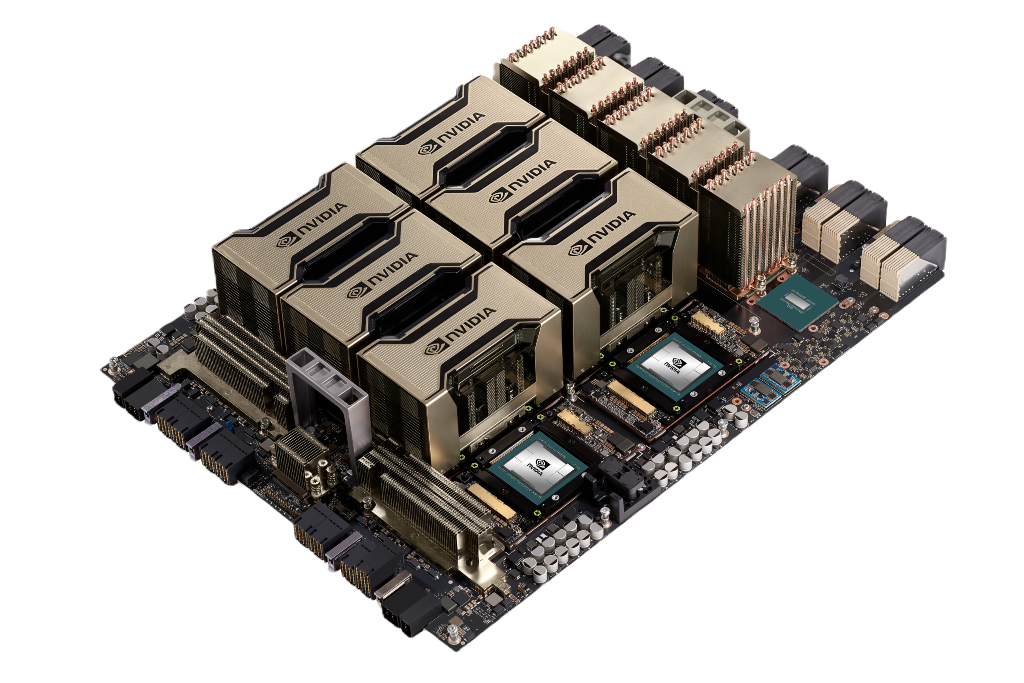

NVIDIA A100 SXM

8

80 GB

208

1024 GB

6x 3.8TB NVMe

IB 1600G

$1.35 / GPU

NVIDIA A100 SXM

8

40 GB

128

1024 GB

4x 3.8TB NVMe

IB 800G

$1.15 / GPU

NVIDIA A100 PCIe

4

40 GB

64

512 GB

2x 3.8TB NVMe

-

$1.15 / GPU

NVIDIA A100 MIG

1

20 GB

5

55 GB

-

-

$0.58 / GPU

Configurations

Per-minute billing with on-demand and reserved options. All configurations available as bare metal, VM, or model endpoints.
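With per-minute billing, a job is charged for the minutes it actually runs rather than for whole hours. The sketch below assumes the listed on-demand prices are per GPU per hour and that a partially used minute is billed as a full minute; both are assumptions to check against Denvr's billing terms, and the function name is hypothetical.

```python
import math


def on_demand_cost(price_per_gpu_hour: float, gpus: int,
                   runtime_seconds: float) -> float:
    """Cost of a run billed per started minute (rounding rule assumed)."""
    minutes = math.ceil(runtime_seconds / 60)
    return round(price_per_gpu_hour / 60 * gpus * minutes, 2)


# An 8x A100 SXM 80 GB node ($1.35 per GPU) running for 2 h 10 min 30 s.
print(on_demand_cost(1.35, 8, 2 * 3600 + 10 * 60 + 30))  # 23.58
```

Billed by the whole hour instead, the same run would be charged for 3 hours on 8 GPUs, or $32.40.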

Related GPUs

Compare Denvr GPU options by workload and performance requirements.

| | Intel Gaudi 2 | NVIDIA A100 | NVIDIA H100 |
|---|---|---|---|
| Optimized For | Cost-effective inference, fine-tuned models | Distributed training, multi-node scaling | Large model training, high-throughput inference |
| VRAM | 96 GB | 80 GB | 80 GB |
| Memory Bandwidth | 2,450 GB/s | 2,039 GB/s | 3,350 GB/s |
| FP64/FP32 | - | 19.5 TFLOPS | 67 TFLOPS |
| FP16 | 432 TFLOPS | 312 TFLOPS | 1,979 TFLOPS (with sparsity) |
| FP8 | 865 TFLOPS | - | 3,958 TFLOPS (with sparsity) |
| NVLink | - | 600 GB/s | 900 GB/s |
| On-Demand Pricing | $1.25 / GPU | $1.35 / GPU | $2.30 / GPU |
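For LLM inference, memory bandwidth often matters more than peak TFLOPS. A common back-of-the-envelope bound for single-stream (batch size 1) decoding is that every weight is read once per generated token, so tokens per second ≤ memory bandwidth ÷ weight bytes. The sketch below applies that bound to the A100 (2,039 GB/s) and H100 (3,350 GB/s) bandwidth figures for an assumed 13B-parameter FP16 model; it ignores KV-cache reads, kernel efficiency, and batching, so real per-stream throughput is lower and batched aggregate throughput can be much higher.

```python
def decode_tokens_per_s_bound(bandwidth_gb_s: float, params_billion: float,
                              bytes_per_param: float = 2.0) -> float:
    """Upper bound for batch-1 decoding: every weight is read once per token."""
    weight_gb = params_billion * bytes_per_param
    return bandwidth_gb_s / weight_gb


# Memory bandwidth in GB/s; the 13B FP16 model is an illustrative assumption.
bandwidth = {"A100": 2039, "H100": 3350}
for name, bw in bandwidth.items():
    tps = decode_tokens_per_s_bound(bw, params_billion=13)
    print(f"{name}: <= {tps:.0f} tokens/s per stream")
```

On this bound, the H100's roughly 64% higher bandwidth yields roughly 64% more tokens per second per stream, while its on-demand price is roughly 70% higher ($2.30 vs. $1.35 per GPU).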

Infrastructure you can trust at scale

As an NVIDIA Cloud Partner, we build and operate AI clusters following NVIDIA Reference Architectures. Your models and data are protected by strict privacy safeguards and SOC 2 Type 2 security practices.